By Saswati Banerjee

For all the talk around artificial intelligence, very little of it begins with people.

We hear about models, automation, scale. We hear about how AI is transforming the future of work. What we don’t hear enough about is the human effort that makes any of it possible in the first place.

The careful labeling of images. The repetitive tagging of data. The judgment calls that teach machines what matters and what does not.

That gap between perception and reality is exactly where Humans in the Loop: Screening and Conversation on AI, Work & Inclusion chose to sit.

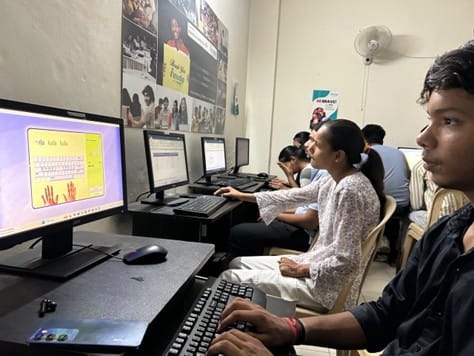

Hosted by Anudip Foundation at IIIT Hyderabad, the evening did not try to simplify AI. It did something more difficult. It slowed the conversation down and turned it toward the people who are usually left out of it.

Where the Conversation Actually Began

It started on a note that felt both technical and deeply human at the same time.

Sandip Shukla did not take long to get to the point. “AI annotation must be contextual and culturally specific,” he said, almost as a reminder rather than a provocation. The line hung in the room for a moment. It reframed something many people tend to overlook. Data is not neutral. The way it is labeled, interpreted, and fed into systems carries the imprint of the people doing that work. Strip away context, and what you are left with is not intelligence, just approximation.

That thought quietly set the direction for everything that followed.

When Radha Basu took the stage, she did not move away from it. She went deeper. Instead of speaking about technology in isolation, she brought it back to people, to access, to the question of who gets to participate in this moment. “Our role is not to create capability, but to enable it,” she said. “If you provide access, everything else follows.”

It did not sound like a grand statement. If anything, it was delivered with a kind of clarity that made it feel obvious. And yet, it shifted the lens. The conversation was no longer about whether underserved communities can be part of the AI economy. It became about what stands in the way, and what happens when that barrier is removed.

Between those two ideas, one about context, the other about access, the tone for the evening was set without needing to say so explicitly.

A Film That Holds Up a Mirror

Humans in the Loop centres on an Adivasi woman from Jharkhand navigating the invisible world of AI data work. She labels images. She annotates data. Her labour feeds into machine learning systems she may never see, for products she may never use. The film asks, quietly but insistently, who counts in the story of artificial intelligence and whose work goes unacknowledged.

It is not an easy film to sit with. That was precisely the point.

Aranya Sahay spoke about the film in a way that felt unhurried, almost as if he were still piecing it together as he went. He mentioned coming across a report by Karishma Mehrotra on Adivasi women in Jharkhand working as data labelers. What stayed with him was not just the story, but the question that followed. What do you do with something like this?

The answer, as it turned out, was not immediate. He spoke about sitting with it, letting it take shape. At some point along the way, a comparison began to make sense to him. “Data labeling, in its repetition and care, is much like parenting,” he said.

When the film ended, the applause did not feel forced or polite. It carried on a little longer than usual. Maybe because the room was full of people who understood the system being shown on screen, and still saw something in it they had not quite looked at this way before.

The Four Women Who Made It Real

The shift in the room was almost immediate when the conversation turned to the four women. Until then, it had been about ideas, systems, the larger shape of things. Now it was about lived experience. Monisha Banerjee and Santanu Paul did not so much “moderate” this part as open the space and let the stories come through. The film had just ended. Its echoes were still there. And then, almost seamlessly, those echoes found real voices.

Anita Kachhap spoke first. She did not dramatize her past, but you could sense the weight of it. A time when her world had narrowed to caregiving, to getting through each day, to managing without support. From there to where she stands now is not a straight line. Today, she works at iMerit as a Senior IT and ITeS Executive, contributing to systems that deal with financial risk. It is precise work, the kind that demands attention and trust. What stayed, though, was not the designation. It was something she said about her daughters. They watch her leave for work, she shared, and talk about wanting the same future. Not just a job, but this particular world. AI. Technology. That kind of aspiration does not appear out of nowhere.

Khushboo Parveen’s story unfolded differently, though it carried its own quiet intensity. Growing up, the goal was simple. Earn. Support the family. There was not much room to think beyond that. Today, she works on agricultural AI projects, building models that help identify crops and weeds, work that connects her to teams and outcomes far beyond her immediate surroundings. Listening to her, you got the sense that the shift was not just professional. It was in how she placed herself in the world. From managing constraints to working at the edge of something evolving.

Rashmika spoke about a period many can relate to, but few recover from quickly. When the pandemic hit, her father’s daily-wage work stopped. Income disappeared. Plans stalled. It could have gone in many directions from there. Instead, she found her way into training, and then into a role at Wells Fargo, working in fraud and claims operations. It is the kind of work where decisions matter, where systems are constantly being tested and refined. There was no sense of spectacle in how she spoke about it. Just a steady acknowledgment of what it took to get there.

Sakshi Pandey’s journey carried a different set of challenges. Leaving a remote village in Gumla and stepping into a city like Hyderabad is not just a change of place. It is a shift in language, pace, expectations. Everything feels unfamiliar at first. She spoke about that adjustment without overstating it. You could fill in the gaps yourself. Today, she works as a Finance Analyst at Deloitte. It sounds straightforward when said like that, but it rarely is.

What connected all four was not just where they had arrived, but the distance they had traveled to get there. There was a noticeable change in how they spoke about themselves. Not hesitant, not overly rehearsed. Just certain. And that certainty seemed to ripple outward. Into families, into younger siblings, into children who are now growing up with a slightly altered sense of what is possible.

Nothing about their stories felt abstract. They were specific, grounded, and, in their own way, unfinished. Which is perhaps what made them stay with you.

The Invisible Labour Question

One of the most important ideas the event surfaced was the concept of invisible labour in the digital workforce. Data annotation and AI training are foundational to every AI product that exists. Without the people who label images, transcribe audio, and categorize information at scale, the large language models and computer vision systems that power modern technology would not function. Yet the workers doing this labour are almost never visible in the public conversation about the future of work.

The film made that invisibility visible. The panel made it personal. Together, they created something unusual: a space where the abstract language of digital workforce development gave way to something more truthful.

This is also where the question of algorithmic bias becomes most urgent. When the people annotating data come from a narrow band of backgrounds, the AI systems trained on that data reflect those limitations. Building a more diverse and representative data workforce is not just a social good. It is a technical necessity for AI that actually works equitably.